Abstract

A topological conception of "bootstrap" proves Berkson bias in nutritional epidemiology

Author(s): Gómez González, Carmen1; Peña Rodríguez, Amelia2; Salas Díaz, Inmaculada3; Praena Fernández, Juan Manuel4; Gálvez Acebal, Juan5; Lozano Rodríguez, Jesús6; Vilches Arenas, Ángel7; Ortega Calvo, Manuel8

Introduction: The common semantic core of all uses of “bootstrapping” is the accomplishment of a complex task by means of a simple gesture (a rider and his horse can make a great leap once the rider has merely pulled on his bootlaces). Bootstrapping is a statistical method designed to estimate the sampling distribution of an estimator by resampling with replacement.
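The resampling idea described above can be illustrated with a minimal sketch. It is not the authors' procedure: the function name `bootstrap_distribution`, the eight data values, the number of repeats, and the choice of the mean as the estimator are all assumptions made for illustration.

```python
import random
import statistics


def bootstrap_distribution(sample, estimator, n_repeats=1000, seed=0):
    """Approximate the sampling distribution of `estimator`.

    Each repeat draws len(sample) values from `sample` with replacement
    and applies `estimator` to that resample.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(sample)
    return [estimator(rng.choices(sample, k=n)) for _ in range(n_repeats)]


# Hypothetical measurements (not taken from the study).
sample = [2.1, 3.4, 1.9, 5.0, 4.2, 3.3, 2.8, 4.7]

# One bootstrap estimate of the mean per resample.
boot_means = bootstrap_distribution(sample, statistics.mean)

# The spread of the bootstrap estimates approximates the standard error.
standard_error = statistics.stdev(boot_means)
```

The list `boot_means` plays the role of the estimated sampling distribution; any other estimator (a median, an odds ratio) could be passed in place of `statistics.mean`.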

Methodology: To compensate for the epistemological weaknesses of sample size calculations, researchers should aim for the smallest possible values of the relative sampling error or the design effect. Alternatively, we can create a virtual universe (VU) by topologically arranging the samples obtained by “bootstrap”.

Results: The size of the VU will be approximately equal to the number of repeats multiplied by the size of the original sample. In frequentist terms, we can state a hypothesis of equality (H0) and a hypothesis of inequality (H1) between our VU and the actual population (AP) from which the sample comes. To support these hypotheses, we have developed a practical demonstration of Berkson bias in a case-control design by bootstrap resampling.
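The size relationship stated above can be checked with a short sketch. It assumes that the VU is formed by simply pooling all bootstrap resamples, and that each resample has the same size as the original sample; the abstract does not specify the construction, so `build_virtual_universe`, the 50 placeholder records, and the 200 repeats are hypothetical.

```python
import random


def build_virtual_universe(sample, n_repeats, seed=0):
    """Pool n_repeats bootstrap resamples into one list (the assumed VU)."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(sample)
    virtual_universe = []
    for _ in range(n_repeats):
        # Each resample draws n records with replacement.
        virtual_universe.extend(rng.choices(sample, k=n))
    return virtual_universe


# Hypothetical original sample of 50 record identifiers.
sample = list(range(50))

vu = build_virtual_universe(sample, n_repeats=200)

# Under these assumptions the VU size is repeats times sample size.
vu_size = len(vu)
```

Under these assumptions the product is exact rather than approximate, and every record in the VU is a copy of a record in the original sample.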

Conclusion: We advocate a topological concept of resampling with “the bootstrap” that can extend the hierarchical external validation scheme proposed by Justice et al. to a 0.1 level, which corresponds to the effect of the simulator on the original study data set.

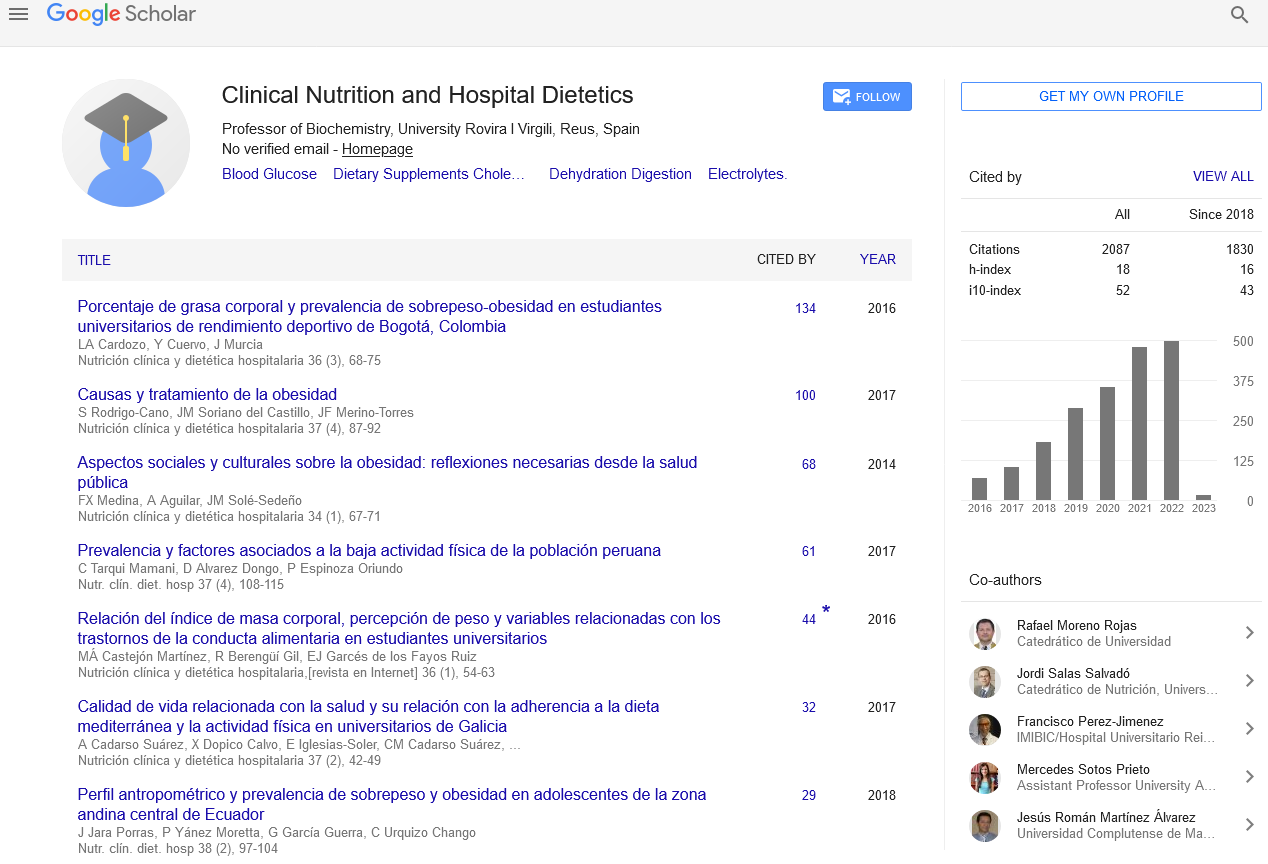
